If you’ve never run an A/B test (“split test”) on your WooCommerce website, this article is for you. And if you want to discover how I run my tests on this very website without third-party software, read on.

So, why A/B test a WooCommerce website?

Because your design, development and business decisions should be based on data-driven hypotheses and experimental validation, as opposed to “everyone-is-doing-this-thing-so-I-should-do-it-too” theories.

In this article, I’d like to introduce you to the concept of split testing, go through some statistics formulas, describe my first ever A/B test here on Business Bloomer, and finally share the PHP snippets I use for running quick A/B tests on this same WordPress / WooCommerce website, so that you can learn a thing or two about this very important topic.

Enjoy!

What A/B Tests Are

An A/B test experiment, also called a “split test”, lets website visitors see either “version A” (usually, the original design) or “version B” (a better design?) of a button, section, or entire page template, and lets the website owner track views and clicks.

By knowing whether “version A” converts better than “version B”, or vice versa, the website owner can make a data-driven decision and keep the design as it is (version A) or change it (version B).

This way, all website enhancements are decided based on data, and not personal opinions.

Example: Mark believes that add-to-cart buttons with a red background convert better than the default background color. Laura sets up a split test where some customers see the original button, and others see the red version. Once the test is over, Mark knows that customers dislike the red color, so his opinion was wrong and no change is required.

How To Run Split Tests

Now, in order to decide on and run an experiment, I suggest these 5 easy steps:

- Define the hypothesis: this could be the result of a personal opinion or, even better, a trend you’ve observed in your website analytics. For example, maybe your Checkout page is converting below 1%; by looking at other websites you notice that their Checkout page is much cleaner and has no distractions. Your hypothesis could therefore be: the WooCommerce Checkout page converts better if there is no header and footer.

- Define a relevant click event: this is strictly connected to the hypothesis you’ve made. In the example above we’re talking about the Checkout page, so the event we want to measure the hypothesis against is the number of “Place Order” button clicks. 99% of the time you’re ok with tracking simple clicks – it gets more difficult when you need to track other kinds of interactions (e.g. social shares), purchase totals, or sign ups on different websites because you will need external APIs.

- Show content conditionally: this is the beginning of the experiment. You need to find a way (see snippet below) to either show Version A or Version B of your hypothesis. In our example, Version A would be the default Checkout page with header + footer, while Version B would be the one without the header and without the footer.

- Track views and clicks: while the split test is up and running, you need a way to count Version A and Version B views, and Version A and Version B conversions (“Place Order” button clicks in our example). I’ve come up with a simple PHP counter which uses WordPress hooks and Ajax (see snippet below).

- Decide the winner: or rather, let the data decide. At the end of the experiment, if the result is statistically significant, you will know whether Version A or Version B is the best.

Statistical Significance Formula

Now, because everything depends on this “statistically significant” result, we need to study a bit of statistics fundamentals, so that we can actually determine if our A/B test is valid or not.

A result is statistically significant when the difference between Version A and Version B is too large to be explained by chance alone. To measure it, I use a variance-like value: how far the observed numbers are from the values we’d expect if both versions performed the same.

Let me immediately show you an example: Version A gets 1,000 views and 10 clicks; Version B gets 1,000 views and 11 clicks. In this case we can’t really pick a winner because both versions had a very similar conversion rate (1.0% vs 1.1%), so the variance is very small and there is no room for a decision.

Now, imagine Version A gets 1,000 views and 3 clicks while Version B gets 1,000 views and 25 clicks:

| Checkout Page Split Test | Version A (header & footer) | Version B (no header & footer) |
|---|---|---|
| Views | 1000 | 1000 |
| Clicks | 3 | 25 |
| No clicks (views minus clicks) | 997 | 975 |

Without even calculating the variance, it’s evident that the two values are so far from the mean value that this is a statistically significant result!

But let’s calculate the actual variance value.

Expected values

The variance is a measure of the distance between our observed values and their mean (expected) value. The greater the distance, the more significant the result.

To calculate the expected value for each observed value (click on A, click on B, no click on A, no click on B), we share the combined total between the two versions in proportion to their views.

The expected value for our “clicks on version A” event would be:

$$\mathrm{EXP}_a = \frac{(\mathrm{clicks}_a + \mathrm{clicks}_b) \cdot \mathrm{views}_a}{\mathrm{views}_a + \mathrm{views}_b}$$

Now, because $\mathrm{views}_a = \mathrm{views}_b$ in our case, this is the same as a simple average:

$$\mathrm{EXP}_a = \frac{\mathrm{clicks}_a + \mathrm{clicks}_b}{2}$$

This takes us to the following expected values:

| Checkout Page Split Test | Version A (header & footer) | Version B (no header & footer) |
|---|---|---|
| Views | 1000 | 1000 |
| EXPECTED Clicks | (3+25)/2 = 14.00 | (3+25)/2 = 14.00 |
| EXPECTED No clicks | (997+975)/2 = 986.00 | (997+975)/2 = 986.00 |
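If you’d rather not do this by hand, the expected values can be worked out with a few lines of plain PHP. This is only a minimal sketch: the function name `bb_expected_values()` is made up for illustration, and it uses the full formula, so it also works when the two versions get a different number of views.

```php
<?php
/**
 * Expected clicks / no clicks for each version, assuming both versions
 * convert at the same rate (the "no difference" scenario).
 * Hypothetical helper, for illustration only.
 */
function bb_expected_values( int $views_a, int $clicks_a, int $views_b, int $clicks_b ): array {
    $total_views  = $views_a + $views_b;
    $total_clicks = $clicks_a + $clicks_b;

    // Share the total clicks between A and B in proportion to their views
    $exp_clicks_a = $total_clicks * $views_a / $total_views;
    $exp_clicks_b = $total_clicks * $views_b / $total_views;

    return array(
        'clicks_a'   => $exp_clicks_a,
        'clicks_b'   => $exp_clicks_b,
        'noclicks_a' => $views_a - $exp_clicks_a,
        'noclicks_b' => $views_b - $exp_clicks_b,
    );
}

// Checkout page example: 14 expected clicks and 986 expected no clicks per version
print_r( bb_expected_values( 1000, 3, 1000, 25 ) );
```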

Variance

Now we can calculate the variance of our set of results. The more spread out the data, the larger the variance is in relation to the mean.

I compare the observed values (“O”) to the expected values (“EXP”) by subtracting the expected value from the observed one, squaring the result, and dividing it by the expected value:

$$\mathrm{VAR}_a = \frac{(O_a - \mathrm{EXP}_a)^2}{\mathrm{EXP}_a}$$

This gives us these variance values:

| Checkout Page Split Test | Version A (header & footer) | Version B (no header & footer) |
|---|---|---|
| Views | 1000 | 1000 |
| VAR Clicks | (3-14)²/14 = 8.64 | (25-14)²/14 = 8.64 |
| VAR No clicks | (997-986)²/986 = 0.12 | (975-986)²/986 = 0.12 |

Chi-Squared value

By summing up the variance values, we get the “Chi-Squared” ($\chi^2$) value. This gives us the “confidence” value of the experiment: the higher it is, the more likely it is that the difference between Version A and Version B is real rather than random.

$$\chi^2 = \mathrm{VAR}_a + \mathrm{VAR}_b + \mathrm{VAR}_{no,a} + \mathrm{VAR}_{no,b}$$

In our case we get:

$$\chi^2 = 8.64 + 8.64 + 0.12 + 0.12 = 17.52$$
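The whole calculation (expected values, variances, sum) fits in one small function. Here is a rough sketch in plain PHP; `bb_chi_squared()` is a name I made up for this example, and the early return for empty data is my own assumption about how to handle tests with no views or no clicks.

```php
<?php
/**
 * Chi-Squared value for a split test with two outcomes per version
 * (click / no click). Hypothetical helper, for illustration only.
 */
function bb_chi_squared( int $views_a, int $clicks_a, int $views_b, int $clicks_b ): float {
    $total_views  = $views_a + $views_b;
    $total_clicks = $clicks_a + $clicks_b;

    // Assumption: with no views, no clicks or only clicks there is nothing to compare
    if ( $views_a <= 0 || $views_b <= 0 || $total_clicks <= 0 || $total_clicks >= $total_views ) {
        return 0.0;
    }

    // Expected clicks, shared between A and B in proportion to their views
    $exp_a = $total_clicks * $views_a / $total_views;
    $exp_b = $total_clicks * $views_b / $total_views;

    // Same order in both arrays: clicks A, clicks B, no clicks A, no clicks B
    $observed = array( $clicks_a, $clicks_b, $views_a - $clicks_a, $views_b - $clicks_b );
    $expected = array( $exp_a, $exp_b, $views_a - $exp_a, $views_b - $exp_b );

    $chi_squared = 0.0;
    foreach ( $observed as $i => $o ) {
        $chi_squared += ( $o - $expected[ $i ] ) ** 2 / $expected[ $i ];
    }
    return $chi_squared;
}

echo round( bb_chi_squared( 1000, 3, 1000, 25 ), 2 ); // 17.53
```

The function returns 17.53 rather than 17.52 simply because it sums the unrounded variance values.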

Now we compare this value against the Chi-Squared table. This will tell us IF our experiment is statistically significant:

| alpha | 0.100 | 0.050 | 0.025 | 0.010 | 0.005 |
|---|---|---|---|---|---|
| Chi-Squared critical value (1 degree of freedom) | 2.706 | 3.841 | 5.024 | 6.635 | 7.879 |

Well, 17.52 is more than 7.879, so “the results are statistically significant with 99.5% confidence” (alpha is 0.005 so confidence is 1 – alpha), meaning there’s a 1 in 200 chance of making an error in the stated relationship.

The most common confidence level is 95%, so alpha is 0.050. In this case, the Chi-Squared value would need to be equal to or exceed 3.841 for the results to be statistically significant.
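The table lookup can be automated too. A minimal sketch, assuming the five critical values from the table above are the only thresholds you care about; the function name `bb_confidence_level()` and the choice of returning 0.0 below the 90% threshold are my own, for illustration.

```php
<?php
/**
 * Highest confidence level (in %) reached by a Chi-Squared value,
 * based on the critical values for 1 degree of freedom.
 * Returns 0.0 when even the 90% threshold is not reached.
 * Hypothetical helper, for illustration only.
 */
function bb_confidence_level( float $chi_squared ): float {
    // array( critical value, confidence = 1 - alpha ), strictest first
    $table = array(
        array( 7.879, 99.5 ),
        array( 6.635, 99.0 ),
        array( 5.024, 97.5 ),
        array( 3.841, 95.0 ),
        array( 2.706, 90.0 ),
    );
    foreach ( $table as [ $critical, $confidence ] ) {
        if ( $chi_squared >= $critical ) {
            return $confidence;
        }
    }
    return 0.0;
}

echo bb_confidence_level( 17.52 ) . "\n"; // 99.5
echo bb_confidence_level( 3.0 ) . "\n";   // 90
```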

Case Study: Business Bloomer Single Post Header Redesign

On Business Bloomer blog posts (like the one you’re reading!), I wanted to drive more people to the WooWeekly newsletter and clean up the header. Blog posts are – at least in my case – the only source of income for my WooCommerce consulting, so they’re really, really important.

I studied about 20 marketing/tech blogs and tried to find what they had in common:

Github, Ahrefs, Hubspot, Copyhackers, Growandconvert, Intercom, Cxl, Unbounce, Kajabi, Copyblogger, Smashingmagazine, Stackoverflow, Css-tricks, Web.dev, Freecodecamp, Indiehackers, Dev.to, Sitepoint, Hackernoon, Buffer, Fastcompany, Coschedule

First test

At this stage I sketched some design changes on paper, and then turned them into PHP/HTML/CSS. This was the original version: centered title, blog information on the left and calls to action on the right. The font was way too small, and the colors were difficult to read due to poor contrast.

The original version (A)

I decided that the best hypothesis was to move to a centered layout, with categories and tags above the title, no Twitter share, and a newsletter sign up button instead of a plain text link.

The redesigned version (B)

The experiment started, and these were the observed values:

| Blog Post Header Split Test | Version A (original) | Version B (new) |
|---|---|---|
| Views | 21342 | 21381 |
| Clicks on newsletter CTA | 3 | 21 |

By using the formulas we’ve seen above (expected values, variances, etc.) we calculate the statistical significance: 6.75 + 6.75 + 0.00 + 0.00 = 13.50 (it’s above 7.879 in the Chi-Squared table, so there is 99.5% confidence that this is the correct result).
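Because Version A and Version B didn’t get exactly the same number of views, the expected values can also be worked out with the full formula instead of the simple average. A rough check, with my own rounding to two decimals:

$$\mathrm{EXP}_a = \frac{(3 + 21) \cdot 21342}{21342 + 21381} \approx 11.99 \qquad \mathrm{EXP}_b = \frac{(3 + 21) \cdot 21381}{21342 + 21381} \approx 12.01$$

$$\chi^2 \approx \frac{(3 - 11.99)^2}{11.99} + \frac{(21 - 12.01)^2}{12.01} + \frac{(21339 - 21330.01)^2}{21330.01} + \frac{(21360 - 21368.99)^2}{21368.99} \approx 6.74 + 6.73 + 0.00 + 0.00 = 13.47$$

The small gap from 13.50 comes only from rounding the expected clicks to 12, and the conclusion doesn’t change: the value is well above 7.879.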

Version B, the one with an actual newsletter CTA button, won by far.

Second test

Now that I had a winner in the centered header redesign, I wanted to maximize clicks on the newsletter CTA, so I came up with a more relevant button label (“Join 18,000 readers” instead of “Stay updated”).

Version A (“Stay updated”) and Version B (“Join 18,000 readers”)

Results:

| Blog Post Header Split Test | Version A (original) | Version B (new) |
|---|---|---|
| Views | 18187 | 18299 |
| Clicks on newsletter CTA | 14 | 4 |

Statistical significance: 2.78 + 2.78 + 0.00 + 0.00 = 5.56, which is above 5.024 in the Chi-Squared table. This means Version A, with the “STAY UPDATED” button, won with 97.5% confidence.

Third test

I still couldn’t trust the “Stay updated” label, so I split tested it against “Subscribe now”. This is how beautiful split tests are… they can confirm or go against your personal hypotheses!

I won’t share the screenshots this time, but the results were clear enough. The values were almost identical, so statistical significance was not reached, which means “Stay updated” was fine as it was. And so it stayed!

I also noticed no one was clicking on the byline (Rodolfo Melogli) and got rid of it.

The version you’re seeing now is the final one – I will of course continue split testing it if necessary or if I identify an opportunity to get more people to sign up to WooWeekly.

PHP Snippets: Showing A or B, Tracking, Reporting

Now the part you’ve been waiting for. How did I implement conditional content, click tracking, and reporting? Here are the different functions:

PHP Snippet 1: Show Version A or Version B

I came up with a smart way of showing conditional content: I add a class “a” or “b” to the BODY of my WordPress site. The system picks either one by looking at the timestamp: if it’s an even number, it picks “a”; if odd, it picks “b”. This generates an almost perfect 50/50 split between page views:

    /**
     * @snippet       Add Body Class “A” or “B”
     * @how-to        Get CustomizeWoo.com FREE
     * @author        Rodolfo Melogli
     * @compatible    WooCommerce 8
     * @donate $9     https://businessbloomer.com/bloomer-armada/
     */

    add_filter( 'body_class', 'bbloomer_experiment_a_or_b' );

    function bbloomer_experiment_a_or_b( $classes ) {
        // Decide once per page load, so later get_body_class() calls return the same version
        static $a_or_b = null;
        if ( null === $a_or_b ) {
            $a_or_b = time() % 2 == 0 ? 'a' : 'b';
        }
        $classes[] = $a_or_b;
        return $classes;
    }

Now we need to show content based on the BODY class. This is easy in WordPress with the get_body_class() function, and it would work like this in PHP:

    $classes = get_body_class();
    if ( in_array( 'a', $classes ) ) {
        // show Version A
    } elseif ( in_array( 'b', $classes ) ) {
        // show Version B
    }

For split testing a button label, for example, you’d use:

    $classes = get_body_class();
    if ( in_array( 'a', $classes ) ) {
        echo 'STAY UPDATED'; // Version A
    } elseif ( in_array( 'b', $classes ) ) {
        echo 'SUBSCRIBE NOW'; // Version B
    }
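To see why the even/odd timestamp rule gives a balanced split, here is a tiny simulation in plain PHP. It’s only a sketch under a simplifying assumption (one page view per second for 1,000 consecutive seconds, which real traffic won’t match exactly), and `bb_version_for_timestamp()` is a made-up helper that mirrors the rule above without needing WordPress.

```php
<?php
// Hypothetical helper: same even/odd rule as the body class snippet
function bb_version_for_timestamp( int $timestamp ): string {
    return 0 === $timestamp % 2 ? 'a' : 'b';
}

// Assumption: one page view per second, for 1,000 consecutive seconds
$counts = array( 'a' => 0, 'b' => 0 );
$start  = 1700000000; // arbitrary Unix timestamp
for ( $i = 0; $i < 1000; $i++ ) {
    $counts[ bb_version_for_timestamp( $start + $i ) ]++;
}

print_r( $counts ); // 500 views for "a" and 500 for "b"
```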

PHP Snippet 2: Track Views & Clicks

Now we need to find a way to track views and clicks for Version A and Version B. Because it’s not a lot of data, I save the counters in the WordPress options table, with “autoload” set to ‘no’.

The hook that triggers the counters is wp_footer. Views are counted as 1 for each wp_footer call, while clicks are counted via an Ajax listener.

    /**
     * @snippet       Count “A” & “B” Views/Clicks
     * @how-to        Get CustomizeWoo.com FREE
     * @author        Rodolfo Melogli
     * @compatible    WooCommerce 8
     * @donate $9     https://businessbloomer.com/bloomer-armada/
     */

    add_action( 'wp_footer', 'bbloomer_views_click_counter_script' );

    function bbloomer_views_click_counter_script() {

        if ( wc_current_user_has_role( 'administrator' ) ) return; // EXCLUDE ADMINS

        // Count one view for the version shown on this page load
        $classes = get_body_class();
        if ( in_array( 'a', $classes ) ) {
            if ( get_option( 'bb_splittest_views_a' ) ) {
                update_option( 'bb_splittest_views_a', (int) get_option( 'bb_splittest_views_a' ) + 1 );
            } else add_option( 'bb_splittest_views_a', 1, '', 'no' );
        } elseif ( in_array( 'b', $classes ) ) {
            if ( get_option( 'bb_splittest_views_b' ) ) {
                update_option( 'bb_splittest_views_b', (int) get_option( 'bb_splittest_views_b' ) + 1 );
            } else add_option( 'bb_splittest_views_b', 1, '', 'no' );
        }

        // Send an Ajax request on each tracked click, then follow the link
        wc_enqueue_js( "
            $('.track > a:not([href^=\"#\"])').click(function(e){
                e.preventDefault();
                var url = $(this).attr('href');
                var target = $(this).prop('target');
                var experiment = $('body').hasClass('a') ? 'a' : 'b';
                var parentclass = $(this).parent().attr('class') ? $(this).parent().attr('class').split(/\\s+/).join('-') : '';
                $.post( '" . '/wp-admin/admin-ajax.php' . "', { action: 'increment_clicks', element: parentclass, test: experiment } );
                if ( target == '_blank' ) {
                    window.open(url);
                } else {
                    window.location = url;
                }
            });
        " );
    }

    add_action( 'wp_ajax_increment_clicks', 'bbloomer_increment_clicks' );
    add_action( 'wp_ajax_nopriv_increment_clicks', 'bbloomer_increment_clicks' );

    function bbloomer_increment_clicks() {
        // Sanitize the posted values before storing them
        $element = isset( $_POST['element'] ) ? sanitize_text_field( wp_unslash( $_POST['element'] ) ) : '';
        $experiment = isset( $_POST['test'] ) ? sanitize_key( wp_unslash( $_POST['test'] ) ) : '';
        $key = base64_encode( $element . '-' . $experiment );
        if ( $clicks = get_option( 'bb_splittest_clicks' ) ) {
            $clicks[$key] = isset( $clicks[$key] ) ? (int) $clicks[$key] + 1 : 1;
            update_option( 'bb_splittest_clicks', $clicks );
        } else {
            $clicks = array();
            $clicks[$key] = 1;
            add_option( 'bb_splittest_clicks', $clicks, '', 'no' );
        }
        wp_die();
    }

As you can see, I made sure ONLY links whose parent element has the “track” class, and whose URL does not start with “#”, are counted.

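Which means, in the previous example, I need to modify the template a little, and add a DIV wrapper with the “track” class if I wish to track the click event. You should also add a descriptive class name next to the “track” class, e.g. “newsletter”, which will be useful later:

    // example.com is a placeholder: use your own newsletter URL
    $classes = get_body_class();
    if ( in_array( 'a', $classes ) ) {
        echo '<div class="track newsletter"><a href="https://example.com/newsletter/">STAY UPDATED</a></div>';
    } elseif ( in_array( 'b', $classes ) ) {
        echo '<div class="track newsletter"><a href="https://example.com/newsletter/">SUBSCRIBE NOW</a></div>';
    }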
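This is why the descriptive class matters: the tracking code joins the wrapper classes with a dash and appends the version letter, and that label is what ends up in the report. Here is a small plain PHP sketch of the same logic; `bb_click_label()` is a hypothetical helper that only imitates what the JavaScript and the Ajax handler do together.

```php
<?php
/**
 * Rebuild a counter label the way the tracking code does:
 * wrapper classes joined with "-", followed by the version letter.
 * Hypothetical helper, for illustration only.
 */
function bb_click_label( string $wrapper_classes, string $version ): string {
    $parts = preg_split( '/\s+/', trim( $wrapper_classes ) );
    return implode( '-', $parts ) . '-' . $version;
}

$label = bb_click_label( 'track newsletter', 'a' );
$key   = base64_encode( $label ); // the counters array uses this as its key

echo $label . "\n";                // track-newsletter-a
echo base64_decode( $key ) . "\n"; // track-newsletter-a
```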

PHP Snippet 3: Report

[]Now I only need to print the observed values on a custom WP dashboard screen. I’ll call it “BB Split Test” and place this under the WooCommerce submenu:

/** * @snippet A/B Test Report * @how-to Get CustomizeWoo.com FREE * @author Rodolfo Melogli * @compatible WooCommerce 8 * @donate $9 https://businessbloomer.com/bloomer-armada/ */ add_action( ‘admin_menu’, ‘bbloomer_wp_dashboard_split_test_page’, 9999 ); function bbloomer_wp_dashboard_split_test_page() { add_submenu_page( ‘woocommerce’, ‘BB Split Test’, ‘BB Split Test’, ‘manage_woocommerce’, ‘bb-split-test’, ‘bbloomer_page_views_clicks_report’, 9999 ); } function bbloomer_page_views_clicks_report() { $html = ‘

Split Test Stats

‘; $views_a = get_option( ‘bb_splittest_views_a’ ); $views_b = get_option( ‘bb_splittest_views_b’ ); $html .= ‘[]A Views: ‘ . $views_a . ‘

‘; $html .= ‘[]B Views: ‘ . $views_b . ‘

‘; $html .= ‘[]Clicks:

- ‘; if ( $clicks = get_option( ‘bb_splittest_clicks’ ) ) { foreach ( $clicks as $key => $value ) { $html .= ‘

- ‘ . base64_decode( $key ) . ‘: ‘ . $value . ‘

‘; } } $html .= ‘

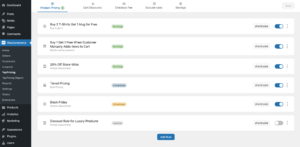

‘; echo $html; } []It should look something like this:

Now, you can pick a tracked event e.g. “track-relatedcta-b” and its sibling version “track-relatedcta-a”, calculate the total variance and compare it with the Chi-Squared table values.

In this case, 44 clicked on track-relatedcta-b, 56 clicked on track-relatedcta-a, with 22,206 and 22,376 views respectively.

Expected/mean values are 50 clicks and 22,241 no clicks; total variance is 0.72 + 0.72 + 0.00 + 0.00 = 1.44, which is below 2.706 (the 90% threshold in the Chi-Squared table). The result is not statistically significant, so the experiment failed, i.e. there is no winner.
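Before doing the math, it helps to put the “a” and “b” counters of each tracked element side by side. A small sketch in plain PHP: the `$clicks` array below mimics what is stored in the `bb_splittest_clicks` option (base64-encoded labels as keys), and `bb_group_clicks_by_element()` is a hypothetical helper name.

```php
<?php
/**
 * Group the stored click counters by tracked element, so each element
 * gets its "a" and "b" totals side by side.
 * Hypothetical helper, for illustration only.
 */
function bb_group_clicks_by_element( array $clicks ): array {
    $grouped = array();
    foreach ( $clicks as $key => $count ) {
        $label = base64_decode( (string) $key, true );
        // Labels are stored as "<element>-a" or "<element>-b"; skip anything else
        if ( false === $label || ! preg_match( '/^(.+)-(a|b)$/', $label, $m ) ) {
            continue;
        }
        if ( ! isset( $grouped[ $m[1] ] ) ) {
            $grouped[ $m[1] ] = array( 'a' => 0, 'b' => 0 );
        }
        $grouped[ $m[1] ][ $m[2] ] += (int) $count;
    }
    return $grouped;
}

// Sample data matching the example above
$clicks = array(
    base64_encode( 'track-relatedcta-a' ) => 56,
    base64_encode( 'track-relatedcta-b' ) => 44,
);

print_r( bb_group_clicks_by_element( $clicks ) );
```

With the sample data, “track-relatedcta” ends up with 56 clicks for “a” and 44 for “b”, ready to be compared against the views of each version.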

Mini-Plugin

I’m working on turning this tutorial into a WooCommerce mini-plugin. If you’re interested in joining the waiting list, feel free to leave a comment below and I’ll notify you personally when it’s ready.

The plugin will have a nice report dashboard, will calculate the statistical significance for you, and will let you save (?) past experiments.